GaN Puts the “D” in LiDAR for Autonomous Vehicles… Enhancing the “Eyesight” of Self-Driving Cars

GaN Talk – Rick Pierson

Dec 05, 2017

Did you see that car? The one with what looks like antlers on the top? Most people would be hard-pressed to miss a self-driving car navigating about public roads. Most autonomous vehicles, or self-driving cars as they are also known, are outfitted with a myriad of sensors, cameras, and even lasers that serve a critical function – providing information about the vehicle’s surroundings. These sensors and cameras are one means of identifying pedestrians, bicycle riders, lane lines, street signs, lights, traffic cones, and other visual details that are important for safe driving.

But look closely at the car in Figure 1 and you will see a laser system called LiDAR (light detection and ranging) atop the vehicle that provides the “eyes” for the vehicle. This LiDAR system rapidly fires a steered beam of light and measures the time it takes for the beam to return, as well as the direction from which it comes. In doing so, LiDAR provides a high-resolution, 360-degree, three-dimensional image of the vehicle’s surroundings.

Figure 1: Self-driving car with LiDAR unit atop the car

Why use LiDAR for autonomous vehicles?

Today, LiDAR is used alongside advanced driver assistance systems (ADAS) sensors in automotive active safety systems designed to avoid collisions and assist with automatic emergency braking. These ADAS functions are leading the way for self-driving vehicles by incorporating LiDAR’s imaging information, and as system costs continue to decline, LiDAR will become the primary “eyes on the road.”

In addition to providing basic image information, LiDAR serves as a key redundancy mechanism for cameras, radar and ultrasonic sensors by providing a full 360-degree view to monitor a car’s environment. By supporting cameras and radars with object recognition, distance estimation and elimination of false positives, LiDAR increases the confidence of automotive companies to deploy cars with progressively greater levels of autonomy.

How does LiDAR work?

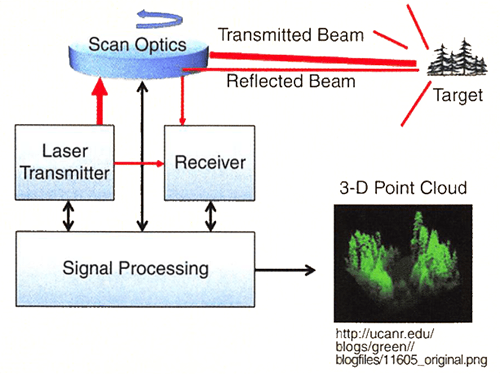

Simply stated, LiDAR 3-D imaging systems generate an intense, extremely short pulse of light from a laser, which is reflected from the various objects and returned to a LiDAR sensor.

Along with the x and y coordinates given by the beam direction, the LiDAR sensor determines the distance to an object by measuring the time it takes for the pulse to return, creating a 3-D image (Figure 2). With the speed of light being approximately 0.3 m/ns, every nanosecond of timing error corresponds to 0.3 m of light travel, or about 0.15 m of error in the measured distance once the round trip is taken into account. Thus, the faster and more precisely the LiDAR operates, the higher the image resolution of the surroundings. This is a technical challenge, and this is where GaN comes in…

Figure 2: How LiDAR works
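The time-of-flight relationship described above can be sketched in a few lines of Python. This is only an illustration, not code from any LiDAR product: the function names are hypothetical, and the one assumption made explicit here is that the pulse travels to the object and back, so the one-way distance is half of the total light path.

```python
# Minimal time-of-flight sketch. Function names are illustrative only.
# Speed of light in metres per nanosecond (about 0.3 m/ns, as in the text).
C_M_PER_NS = 0.299792458


def range_from_round_trip(round_trip_ns: float) -> float:
    """One-way distance in metres for a measured round-trip time in ns."""
    # The pulse travels out and back, so halve the total path length.
    return C_M_PER_NS * round_trip_ns / 2.0


def round_trip_time(distance_m: float) -> float:
    """Round-trip time in ns for a target at the given distance in metres."""
    return 2.0 * distance_m / C_M_PER_NS


def range_error(timing_error_ns: float) -> float:
    """Distance error in metres caused by a timing error in ns."""
    return C_M_PER_NS * timing_error_ns / 2.0


if __name__ == "__main__":
    # Round-trip time for the 100 m automotive range mentioned in the article.
    print(f"100 m target: {round_trip_time(100.0):.0f} ns round trip")
    # Effect of a single nanosecond of timing error on the measured distance.
    print(f"1 ns timing error: {range_error(1.0):.2f} m range error")
```

Under these assumptions, a target 100 m away returns its echo in well under a microsecond, which gives a sense of how tight the timing budget is.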

GaN technology – world’s fastest switch is key to better LiDAR images

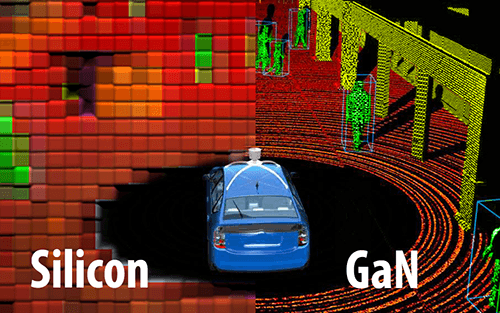

As noted, the speed of the laser is critical to getting the extremely high-resolution images required for safe autonomous transport. GaN technology enables the laser signal to be fired at far higher speeds than comparable silicon MOSFET components. Figure 3 shows how the GaN devices enable the creation of a much higher resolution 3-D map for autonomous vehicle applications.

Figure 3: “Eyes on the Road:” LiDAR system with Silicon laser driver on the left vs. system with GaN laser driver on the right

The technical challenge at the root of triggering fast light beams for the LiDAR system is generating the current pulse needed to fire the pulse of light. To provide the resolution necessary to determine whether the object is a hazard to a vehicle travelling along a highway, the laser must ramp to its peak current in approximately one ns, and it must repeat this pulse with extreme accuracy in terms of delay time, switching times, on time, and current. As an example, for a range of 100 m, such as for automotive sensing, high current pulses are required. At the core of this triggering circuit is an extremely fast GaN transistor. The requirements on the transistor include a high peak current, extremely low capacitance, and stable thermal characteristics as the laser heats up the system. The 100 V eGaN® FETs EPC2016C and EPC2001C are leading components for this demanding application.

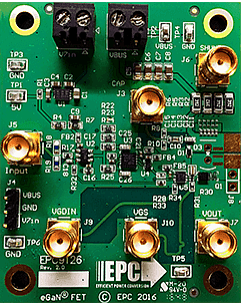

To accelerate the evaluation of these high-speed GaN FETs in LiDAR systems, EPC offers two development boards. These boards are primarily intended to drive laser diodes to emulate LiDAR systems. The EPC9126 development board, shown in Figure 4, features the EPC2016C and is capable of current pulses up to 40 A with pulse widths as low as 4 nanoseconds into a laser diode.

For users needing higher current capability, the EPC9126HC, built around the EPC2001C 100 V eGaN FET, has a pulse current rating of up to 150 A. It can deliver 75 A pulses into a laser diode, with a pulse width as short as 5 ns.

Figure 4: The EPC9126, a 100 V, high-current, pulsed laser diode driver demo board featuring the EPC2016C eGaN power FET, capable of 40 A pulses into a laser diode with a total pulse width of 4 ns (50% of peak).
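To give a rough feel for why nanosecond pulse widths matter, the sketch below applies a common rule of thumb that is not stated in this article: two surfaces along the same beam can only be told apart if they are separated by more than half the spatial length of the pulse, c·τ/2. The function name is hypothetical, and real systems depend heavily on the receiver and signal processing, so treat the numbers as order-of-magnitude estimates only.

```python
# Rule-of-thumb sketch (an assumption, not a figure from the article):
# minimum separable distance between two targets is about c * pulse_width / 2.
C_M_PER_NS = 0.299792458  # speed of light in metres per nanosecond


def range_resolution_m(pulse_width_ns: float) -> float:
    """Approximate minimum separable distance in metres for a pulse width in ns."""
    return C_M_PER_NS * pulse_width_ns / 2.0


if __name__ == "__main__":
    # Pulse widths quoted in the article for the two development boards.
    for board, width_ns in (("EPC9126", 4.0), ("EPC9126HC", 5.0)):
        resolution = range_resolution_m(width_ns)
        print(f"{board}: {width_ns:.0f} ns pulse -> ~{resolution:.2f} m")
```

By this estimate, the 4 ns and 5 ns pulses correspond to roughly 0.6 m and 0.75 m, and a pulse ten times longer would blur objects several metres apart into one return.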

The future of LiDAR

"It's no secret that lidar has emerged as one of the hottest laser-enabled technologies in recent memory, but what is surprising is how rapidly—a short 2 to 3 years—the technology went from Google-car curiosity to mainstream driver-assist implementation."

Alex Lidow, CEO & Co-Founder, Efficient Power Conversion

LiDAR technology is emerging as the leading technology to act as the “eyes” for self-driving cars. Perhaps the strongest indicator of this adoption is the growing lineup of automotive and technology companies investing in it: Toyota, Google, Lyft, Uber, General Motors, and Ford, to name a few. As LiDAR systems become an increasingly reliable set of “eyes” and as system costs continue to decrease, their ubiquitous adoption will change our concepts of vehicle ownership, logistics, and the transportation industry. Perhaps the next car you pass will have its “eyes” on the road, courtesy of a GaN-based LiDAR system!